Why Platform Teams Struggle to Prove Their Impact

The hardest part of running a platform team is explaining its work so that the rest of leadership, including general partners and limited partners, can understand and appreciate the value of its day-to-day operations. Ask any head of platform, and they'll tell you that dashboards, tools, and networking events are much easier to run today than they were a decade ago, but that doesn't mean everyone in leadership intrinsically understands the value of platform operations.

That sounds odd at first because platform teams are deeply embedded in the business development of their portfolio founders.

They support founders in choosing third-party service providers, introduce tools, support key initiatives, and guide organizational decisions that shape how the company scales. In many cases, they sit closest to the patterns that emerge across a portfolio, and they know what works, what doesn’t, and where teams tend to run into trouble.

But despite that proximity, their impact is hard to pin down. Their work is valuable, but its outcomes don't sit in a single place and are often hard to measure.

Influence Without Visibility For The VC Platform Team

A platform team might introduce AI solutions that enhance reporting or guide better decision-making. They might help teams navigate market trends or adopt new workflows. But despite how actively involved they are, there's usually no clear line that says “This result came from platform support.”

Unlike more traditional and well-defined roles, such as investor relations, where deal flow and investor relationship building are a lot easier to trace, platform teams are spread across different companies and teams, often working on diverse challenges and timelines.

For early-stage companies, the platform serves as a non-linear central hub where anything and everything can be addressed, and it is up to the platform manager to be nimble and capable of adapting to the needs of their portcos. So, a decision made today might influence business performance a year later and help the portfolio company succeed, but tracing it back to platform specialists has historically been very difficult.

The influence is very real, and almost every founder acknowledges this, but it's often hard to show solid numbers that directly map to all that effort.

The hands-on platform work of a head of platform

In venture capital, platform exists to serve both founders and the firm's goals. Although the VC platform role is very different from that of an operating partner, the two roles share some requirements, such as developing strong pattern recognition and being obsessed with creating an environment where entrepreneurship thrives.

They diverge, however, in day-to-day focus: whereas the partners focus on deal flow and investor relations, platform teams devote their time and energy to community building, hosting events, building tech stacks for their portfolio companies, and extensive coordination within their Slack groups.

The Measurement Problem

Because of this, most platform teams fall back on what they can track.

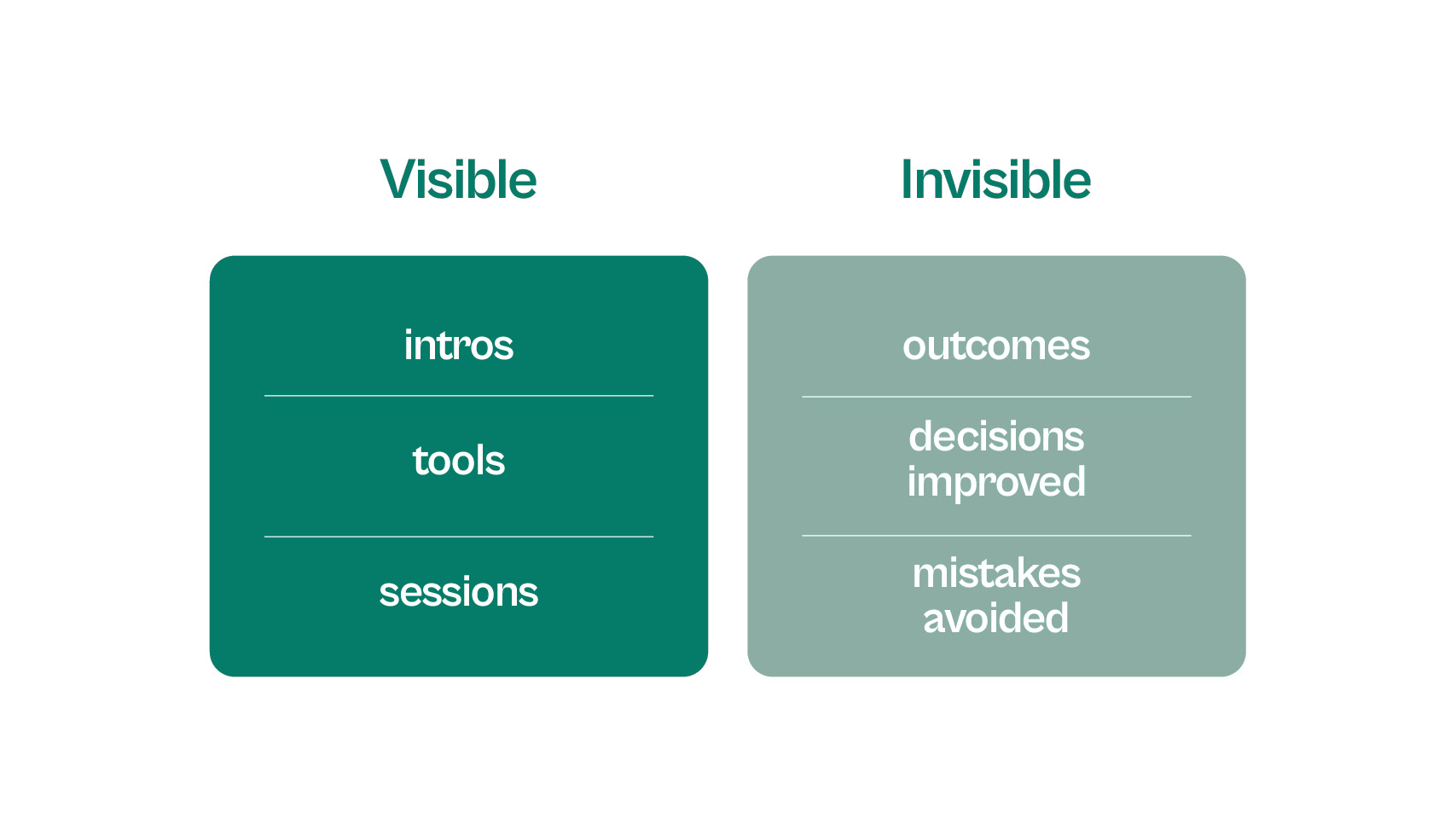

They report:

- introductions made

- tools recommended

- sessions run

- adoption of new AI tools or existing tools

This creates visibility into activity, but not clarity on outcomes. It answers the question: What did we do? But not: What actually worked?

This gap becomes more pronounced as the portfolio grows. Many organizations reach a point where they have more data and more tools but still lack consistent insights, clear attribution, and reliable patterns.

Fragmentation Is the Real Constraint

Platform teams are deeply involved across the portfolio, constantly supporting companies, sharing knowledge, and helping navigate decisions, all of which takes a lot of effort. Yet there's still an issue of fragmentation that few have resolved.

Every company in a portfolio operates within its own environment. Different existing systems, different tools, different levels of platform maturity, and different ways of working. Even when two companies are solving the same problem, the context in which they solve it is rarely identical.

As a result, each company is generating useful signals all the time about how a vendor performs in practice, how quickly a team adopts a new workflow, and where operational friction starts to build. Over time, this adds up to a rich layer of experience across the portfolio.

But that experience doesn’t compound.

It stays local to each company, tied to specific people, decisions, and moments in time. There’s no consistent way to connect what happened in one company to what should happen in another. No shared layer that links decisions to outcomes, or patterns to future recommendations.

So even though platform teams are exposed to more information than almost anyone else in the organization, they’re still forced to operate as if each decision is happening in isolation.

They accumulate knowledge, but not learning.

The Attribution Gap

Even when outcomes are visible, understanding where they came from is rarely straightforward.

A company might adopt a new vendor and see improvements in how they operate a few months later. Another might introduce new workflows and move faster as a result. On the surface, those look like successful decisions. But when you try to trace them back to a specific input, things become less clear.

Was it the vendor itself that made the difference, or the way the team implemented it? Did the timing matter more than the tool? Would the same decision have worked as well in another company with a different team, a different stage, or a different set of constraints? These questions don’t have clear answers, and that’s the problem.

Platform teams operate in the space between influence and outcome. They help shape decisions, but they don’t control the execution. They provide context, but they don’t own the environment in which that context is applied. By the time results show up, they’ve been shaped by too many variables to isolate any single cause with confidence.

Over time, this makes it difficult to build a consistent picture of what actually works.

It also makes it harder to define meaningful platform metrics. Without a clear way to connect actions to outcomes, teams are left interpreting partial signals and inferring impact from what they can see, rather than measuring it directly. That uncertainty carries through into how they demonstrate platform effectiveness and justify ongoing platform investments.

The challenge isn’t a lack of data. It’s that the relationship between decisions and results is rarely linear. And without a way to understand that relationship more clearly, even good decisions can look indistinguishable from average ones.

Why platform teams struggle to prove impact and how they try to fix it

Faced with this ambiguity, most platform teams try to solve it using technology.

The first instinct is usually to introduce more structure. Dashboards are built to track activity. Surveys are sent out to capture feedback. Teams start documenting interactions, logging recommendations, and creating internal views of what’s happening across the portfolio.

On the surface, this feels like progress. There’s more visibility than before. More information to work with. More ways to report on what the team has been doing.

But over time, the limitations start to show. The data that gets captured tends to reflect what is easiest to measure, rather than what matters most. Activity is recorded in detail, while outcomes remain indirect. Feedback is collected, but often lacks context. Reports become more comprehensive, but not necessarily more insightful.

A vendor might appear successful because it’s widely adopted, even if the experience varies significantly between companies. A tool might look underutilized, even if it’s critical in a few high-impact areas. Without a way to connect these signals back to real outcomes, it’s difficult to interpret what any of it actually means.

Even when teams invest in better systems or layer on AI tools, the problem doesn’t automatically resolve. The underlying issue isn’t the absence of data but the lack of cohesion across that data. Signals remain scattered across systems, timelines, and contexts, making it hard to see how decisions play out in practice.

As a result, reporting improves in form, but not in function. It becomes easier to describe what happened, but not to understand why it mattered. And without that understanding, the same uncertainty continues to carry forward into the next set of decisions.

Where AI Changes the Equation

AI does become a powerful solution for platform teams, but not because of speed. Faster analysis, faster workflows, and faster decisions haven't really been the primary limiting factor. Decisions are already being made quickly, often under pressure, and with whatever information is available at the time.

The real issue is what happens after those decisions are made. Patterns are missed, lessons don’t carry forward, and what worked in one company isn’t consistently applied in another. Over time, the same types of mistakes repeat themselves despite the teams' best efforts because the underlying signals aren’t connected.

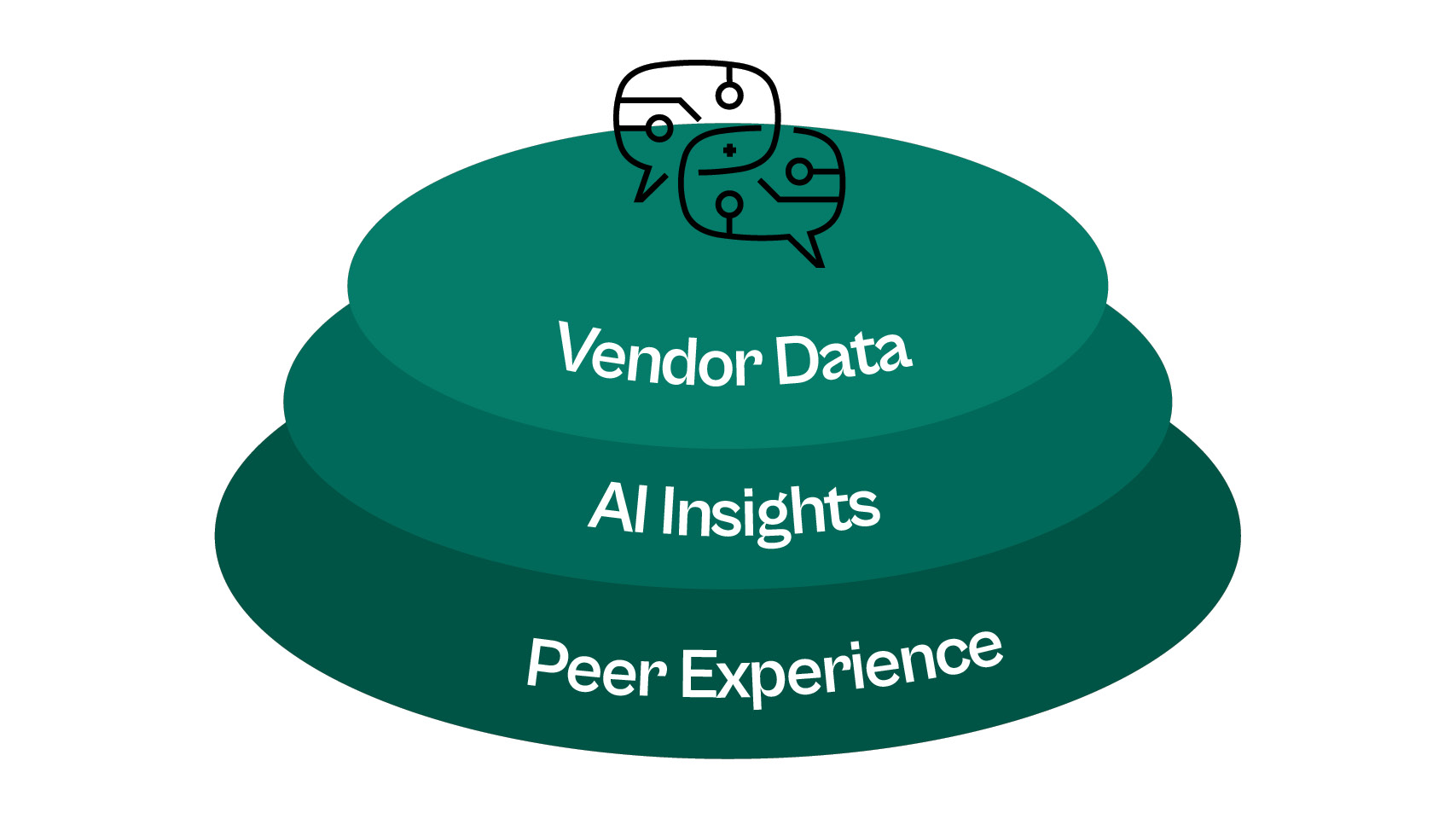

This is the gap AI can start to close. When applied properly, AI doesn’t replace judgment. It changes what teams can see. It can take scattered inputs, such as vendor performance, operational outcomes, internal feedback, and shifts in market data, and begin to connect them in a way that reflects how decisions actually play out over time.

Instead of relying on isolated experiences, platform teams can start to observe patterns across companies. For instance, when a vendor was selected, how did it perform in practice? If a tool was adopted, where did it introduce friction or reduce it a few months in? These insights aren't tied to memory or personal preference, because they come directly from implementation and experience and evolve as conditions change.

This is where capabilities like predictive analytics and more advanced forms of data analysis become useful for surfacing relationships that would otherwise remain hidden. Over time, this creates a different kind of visibility.

Teams are no longer working from memory alone. They’re working from a growing set of signals that reflect real outcomes across the portfolio. That makes it easier to identify where patterns hold, where they break, and where intervention actually changes the trajectory of a company.

None of this reduces complexity or uncertainty, nor does it guarantee better decisions. But it does shift the starting point. Instead of starting with fragmented information, you start from insights that are backed by real-time data collected and monitored with precision over time.

And that shift, subtle as it may seem, is what allows platform teams to move from reacting to problems toward anticipating them.

But is AI alone enough to ensure platform success?

The short answer is no. Even if platform teams can finally see what’s happening across the portfolio, connecting decisions to outcomes, identifying patterns, and surfacing early signals, that still doesn't solve the problem. At least not entirely.

Because visibility and trust are not the same thing.

As AI becomes more embedded in how decisions are supported, the volume of available information increases. More signals, more analysis, more model outputs that appear to offer clarity. On the surface, this looks like progress. But it also introduces a new challenge because not all signals are reliable or even valuable.

Vendors adapt very quickly, and they like to refine how they present performance, highlighting favorable benchmarks and aligning their positioning with what teams want to hear. As AI adoption grows, even the signals themselves can start to look consistent, structured, data-backed, and difficult to question at a glance. That creates a subtle but important risk.

When everything looks credible, it becomes harder to distinguish what actually is. In other words, what can be trusted as the genuinely right solution? AI can aggregate new data, identify correlations, and highlight patterns. But it cannot fully account for how that data was generated, what’s missing from it, or how closely it reflects real-world conditions. Without that context, even well-structured insights can point in the wrong direction.

For platform teams, this shifts the challenge. We're entering an era where access to better information isn't the whole story. You also need to trust the information and have a solid basis for why it is trustworthy. And that’s where a different layer becomes essential, one grounded in experience.

Building Trust And Community

That need for trust changes how platform teams think about data altogether.

If visibility alone isn’t enough, then the question becomes: where does reliable signal actually come from?

For all the progress in AI-powered systems and predictive insights, there’s still a fundamental difference between information presented and information experienced. One can be shaped, optimized, and refined. The other emerges over time, often in ways that aren’t immediately visible.

Over the years, we have worked with PE and VC platform teams and know that the best and most reliable source of information is peer-based knowledge.

Across a portfolio, companies are constantly generating signals that don’t show up in structured reporting. How a vendor behaves after implementation. Where friction appears in real workflows. How their support holds up under pressure. These are not moments that get captured systematically in dashboards or summarized in model outputs. They live in day-to-day experience.

Individually, these signals are easy to overlook. But when they are shared, they start to form a different kind of dataset, one that reflects what actually happens, not just what is claimed.

And importantly, this type of signal is much harder to manufacture.

As AI adoption increases, the ability to generate polished narratives, synthetic benchmarks, and even convincing data becomes more accessible. That doesn’t make all data unreliable, but it does raise the bar for what teams can confidently trust. Signals that come from direct experience and are validated across multiple companies become disproportionately valuable.

For platform teams, this means that instead of relying solely on structured analysis, they begin to layer in peer-based insight. It's a form of validation and a way to sense-check what the data suggests against what others have already encountered.

Over time, this creates a more durable foundation for decision-making, because trust isn't derived from a single source. It is built by combining structured data and shared experience, each reinforcing the other.

How does this actually help platform teams prove impact?

Once platform teams have access to both structured data and trusted peer signals, something important starts to change. They’re no longer just observing what happened; they can begin to understand what consistently leads to a result.

Instead of reporting that a vendor was recommended or widely adopted, teams can point to how that decision played out across different companies. Not just once, but repeatedly. They can see where the same choice led to similar outcomes, where it didn’t, and what conditions influenced the difference.

Over time, this makes it possible to move beyond isolated examples.

Patterns begin to emerge. Not as assumptions, but as signals grounded in experience. A vendor might consistently reduce operational friction for companies at a certain stage. Another might introduce complexity unless specific conditions are in place. A workflow might work well in theory, but create delays in practice. That kind of visibility and insight carries significant ROI.
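One way to make this kind of pattern concrete is to log each decision alongside its context and observed outcome, then group the results by vendor and company stage. The following is only a minimal sketch under stated assumptions: the `DecisionRecord` fields, the company and vendor names, the stage labels, and the -1 to +1 outcome score are all hypothetical illustrations, not a description of any particular product or real dataset.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class DecisionRecord:
    """One logged decision and what happened afterwards (hypothetical schema)."""
    company: str
    stage: str      # e.g. "seed", "series_b" (assumed labels)
    vendor: str
    outcome: float  # assumed score: -1 = worse, 0 = no change, +1 = better


def summarize_by_vendor_and_stage(records):
    """Group outcome scores by (vendor, stage) and report count and mean."""
    grouped = defaultdict(list)
    for record in records:
        grouped[(record.vendor, record.stage)].append(record.outcome)
    return {
        key: {"n": len(scores), "mean_outcome": mean(scores)}
        for key, scores in grouped.items()
    }


# Made-up example records, for illustration only.
records = [
    DecisionRecord("Co A", "seed", "VendorX", 1.0),
    DecisionRecord("Co B", "seed", "VendorX", 1.0),
    DecisionRecord("Co C", "series_b", "VendorX", -1.0),
    DecisionRecord("Co D", "series_b", "VendorX", 0.0),
]

summary = summarize_by_vendor_and_stage(records)
for (vendor, stage), stats in sorted(summary.items()):
    print(vendor, stage, stats)
```

In this toy data the same vendor looks helpful at seed stage and neutral-to-harmful later, which is the kind of condition-dependent pattern described above. The count `n` is kept next to the mean so that thin groups, which are closer to anecdotes than evidence, are easy to spot.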

Platform teams are no longer trying to prove value through individual success stories or aggregated activity. They can start to show how their involvement improves the quality of decisions across the portfolio and how those decisions translate into better outcomes over time.

So instead of saying to leadership, “Let us show you what we did,” the platform head can now say, “Here's what tends to work, where, and why.”

And more importantly, they can now answer the question: What impact have you had lately?

That’s where proof starts to take shape because now you have a growing body of evidence that connects decisions to outcomes in a consistent way.

What Comes Next

For many platform leaders, this is where the real shift begins. The role of the platform is no longer limited to reacting to requests or providing support when asked. It becomes a deliberate platform strategy, grounded in evidence rather than intuition and designed to improve decision quality across the portfolio over time.

This matters because the expectations placed on the platform role are changing. Within any venture capital firm, the platform is increasingly expected to do more than just support portfolio companies. It’s expected to shape how those companies make decisions, how they avoid repeated mistakes, and how they move with greater confidence in uncertain environments.

That’s a new level of responsibility.

It requires platform teams to move beyond surface-level activity and focus on where they can have strategic impact across all their companies. For an early-stage company, the difference is meaningful. The ability to access patterns from across a portfolio, to understand what tends to work in similar conditions and what doesn’t, can significantly reduce the cost of trial and error. It allows teams to move faster where it matters, while avoiding decisions that introduce unnecessary risk. And if we're serious about it, this is how to assist portfolio companies in a sustainable, expansive way: by embedding a layer of insight into how decisions are made, one that can be repeated and relied upon. Over time, that changes how companies operate, how they evaluate options, and how they respond to change.

Within the broader venture capital industry, this shift also redefines the platform position itself.

Instead of being viewed as a support function, the platform becomes a source of differentiated value that directly contributes to how a firm helps its companies succeed. It creates a feedback loop between experience and execution, where each decision improves the next.

Creating long-term value then becomes possible because the teams can consistently guide better decisions across a portfolio. The firms that can do this effectively won’t just be helping their companies operate more efficiently. They’ll be helping them make better choices at critical moments, whether that’s selecting vendors, entering new markets, or adapting to changing conditions.

And in a world where companies are increasingly evaluated for both performance and operational excellence, that advantage extends beyond the portfolio itself. It shapes how those companies are perceived by investors, partners, and even potential customers.

This is also why the conversation is starting to expand across the global VC platform community and into PE and other entrepreneurial ecosystems. There’s a growing recognition that the next phase of platform evolution isn’t about adding more resources. It’s about building systems that allow knowledge to compound, decisions to improve, and outcomes to become more predictable over time.

That’s the direction the platform is heading. And for teams that can make that shift, the next step is clear: build the capability to turn what you already see into something you can stand behind.

Because once you can do that, proving impact stops being a challenge and becomes part of how the platform creates value every day.

If you’re looking to move from activity to evidence, the next step is simple: start structuring the signals behind your decisions. That’s exactly what Proven is built to help you do.